Decoding Request Queueing in Rails

From VMs to Kubernetes

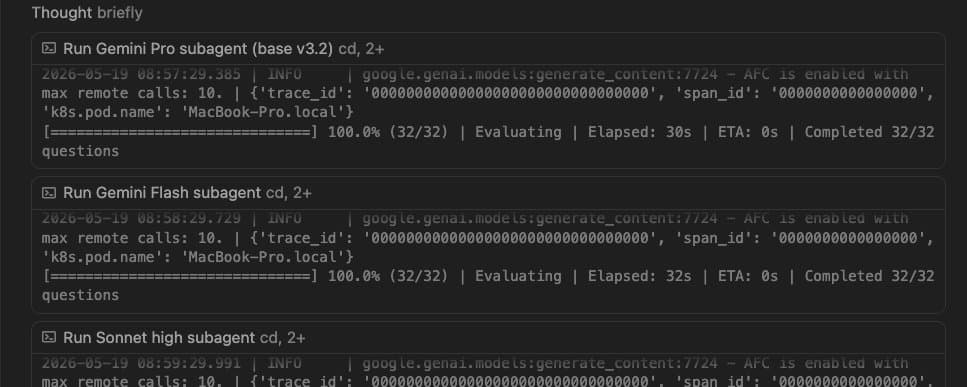

Recently we migrated our backend services from a traditional virtual machine (VM) infrastructure to a containerized environment managed by Kubernetes (K8s). During this transition, we hit a roadblock: under high load, the Rails services would stop responding. On further investigation, we found that after a while all sockets were left in the CLOSE_WAIT state and the processes stopped accepting new requests. We were using Unicorn as our app server, so we decided to move to Puma as part of the same transition, hoping that would solve the issue.

Throughout this process, I realized that my understanding of how and where requests are queued was, at best, incomplete. To fill that gap, I started reading the Puma codebase and learned a fair bit about how web servers actually work. In this post, I will try to explain how queueing actually happens and how we can use this knowledge to build more robust services.

Rethinking Request Queueing

Many people have a somewhat incorrect mental model of where queueing actually happens. Let's fix that first!

In almost all web application setups, a request is first accepted by a load balancer (LB). The load balancer then forwards the request to one of the downstream servers for processing, usually choosing the server with a round-robin or random algorithm.
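Here is a minimal Python sketch of the two dispatch strategies mentioned above. The class names and pod names are hypothetical, and a real load balancer forwards connections rather than returning names; the point is that neither strategy checks whether a pod is busy, and neither holds the request back.

```python
import itertools
import random


class RoundRobinBalancer:
    """Hypothetical LB that cycles through backends in a fixed order."""

    def __init__(self, backends):
        self._cycle = itertools.cycle(backends)

    def pick(self):
        # The request is forwarded right away; nothing is buffered here.
        return next(self._cycle)


class RandomBalancer:
    """Hypothetical LB that picks a backend uniformly at random."""

    def __init__(self, backends, seed=None):
        self._backends = list(backends)
        self._rng = random.Random(seed)

    def pick(self):
        return self._rng.choice(self._backends)


if __name__ == "__main__":
    rr = RoundRobinBalancer(["pod-a", "pod-b", "pod-c"])
    print([rr.pick() for _ in range(5)])
    # ['pod-a', 'pod-b', 'pod-c', 'pod-a', 'pod-b']
```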

It is very important to note that queueing does not happen at the LB!

The request is sent to a listener (a physical server, a K8s pod, a Heroku dyno...), which accepts it on a socket. This happens at the OS layer: the kernel completes the TCP handshake, and that's where the queueing begins. The connection then waits in the socket backlog (the accept queue) until one of the app server processes (Unicorn, Puma...) calls accept() and picks it up.
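You can observe this kernel-side queueing with a short Python experiment on the loopback interface: the server calls `listen()` but not `accept()`, yet every client's `connect()` succeeds because the kernel completes the handshake and parks the connection in the accept queue. The function name and its parameters are illustrative; it should run on Linux or macOS.

```python
import socket


def demo_accept_queue(n_clients=3, backlog=8):
    """Connect clients to a listener that has not called accept() yet."""
    server = socket.socket(socket.AF_INET, socket.SOCK_STREAM)
    server.bind(("127.0.0.1", 0))  # port 0: let the OS choose a free port
    server.listen(backlog)         # the kernel starts queueing connections now
    server.settimeout(2)
    port = server.getsockname()[1]

    clients = []
    for _ in range(n_clients):
        # connect() returns once the kernel has completed the TCP handshake,
        # even though the server process has not accepted anything yet.
        clients.append(socket.create_connection(("127.0.0.1", port), timeout=2))
    client_ports = [c.getsockname()[1] for c in clients]

    # The "worker" finally drains the accept queue, oldest connection first.
    accepted_ports = []
    for _ in range(n_clients):
        conn, addr = server.accept()
        accepted_ports.append(addr[1])
        conn.close()

    for c in clients:
        c.close()
    server.close()
    return client_ports, accepted_ports


if __name__ == "__main__":
    connected, accepted = demo_accept_queue()
    print("connected from ports:", connected)
    print("accepted in order:   ", accepted)
```

The accepted ports should match the connecting ports in the same order, since the Linux accept queue is first-in, first-out.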

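The socket backlog is where the vast majority of queueing happens, as connections wait there for a process to become free and pick them up. On Linux, the backlog an application requests in listen() is capped by the net.core.somaxconn sysctl, which defaulted to 128 before kernel 5.4 (4096 since) and can easily be raised.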
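Because the kernel silently truncates a larger requested backlog to this cap (as documented in the Linux `listen(2)` man page), raising only the app server's backlog setting may not have the intended effect. A small Python helper to check the cap on a Linux host, assuming `/proc` is mounted; the function names are hypothetical:

```python
from pathlib import Path

SOMAXCONN_PATH = Path("/proc/sys/net/core/somaxconn")


def read_somaxconn(path=SOMAXCONN_PATH):
    """Return the kernel's accept-queue cap, or None if it can't be read."""
    try:
        return int(path.read_text().strip())
    except (OSError, ValueError):
        return None


def effective_backlog(requested, somaxconn):
    """Backlog the kernel will actually use for listen(requested)."""
    if somaxconn is None:
        return requested  # cap unknown (e.g. not Linux): report the request as-is
    return min(requested, somaxconn)


if __name__ == "__main__":
    cap = read_somaxconn()
    print("somaxconn:", cap)
    print("listen(1024) ->", effective_backlog(1024, cap))
```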

Puma's Inner Workings

Now, let's dive a little into how the listener works. I will use Puma as an example here.

Puma is what's known as a pre-forking web server (as is Unicorn). This means Puma boots a master process that opens a socket and listens on it for incoming connections. It then calls fork (a system call that creates a duplicate child of the current process) to create N child processes (workers). Each worker inherits the listening socket, so once the N workers are booted, they all accept connections from the same socket.

Just to be clear, the master process's only job is to manage the workers and it does not process any request itself.
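The pre-fork model is small enough to sketch. The following POSIX-only Python illustration (not Puma's implementation) has the parent bind and listen once, then fork workers that inherit the listening socket and each block in `accept()` on it; the parent only records the worker PIDs and never accepts. The worker count, the OS-chosen port, and the reply containing the worker's PID are arbitrary choices for the demo.

```python
import os
import signal
import socket


def serve_forever(listener):
    """Worker loop: accept a connection, reply with this worker's PID, repeat."""
    while True:
        conn, _ = listener.accept()  # every worker blocks on the same socket
        with conn:
            conn.sendall(f"{os.getpid()}\n".encode())


def start_prefork(n_workers=4, host="127.0.0.1"):
    """Master: bind once, then fork workers that share the listening socket."""
    listener = socket.socket(socket.AF_INET, socket.SOCK_STREAM)
    listener.bind((host, 0))
    listener.listen(128)
    pids = []
    for _ in range(n_workers):
        pid = os.fork()
        if pid == 0:  # child: becomes a worker and never returns
            try:
                serve_forever(listener)
            finally:
                os._exit(0)
        pids.append(pid)  # parent: only keeps track of its workers
    return listener, pids


def stop_workers(listener, pids):
    for pid in pids:
        os.kill(pid, signal.SIGTERM)
        os.waitpid(pid, 0)
    listener.close()


if __name__ == "__main__":
    listener, pids = start_prefork()
    port = listener.getsockname()[1]
    served_by = set()
    for _ in range(20):
        with socket.create_connection(("127.0.0.1", port), timeout=2) as c:
            served_by.add(int(c.recv(64).decode().strip()))
    print("workers:", pids, "served by:", served_by)
    stop_workers(listener, pids)
```

Which worker serves each connection is up to the kernel, so the set of PIDs you see can vary from run to run.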

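When a connection arrives, the kernel hands it to one of the idle workers blocked in accept(); which one is not under the application's control. Once a worker picks up a request, it stops accepting from the socket (in Puma's case, once all of the worker's threads are busy) and won't take another request until it is free again.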

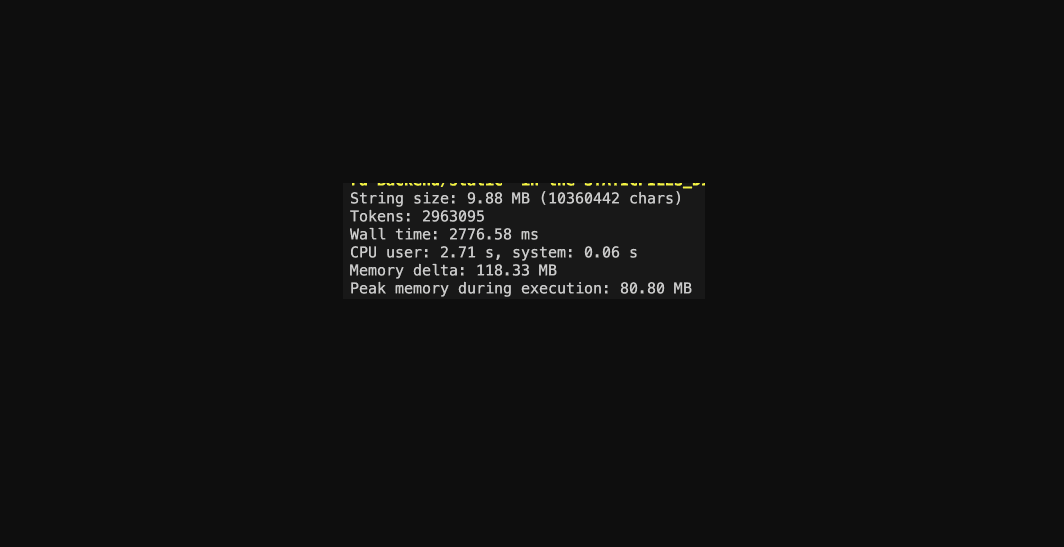
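For a worker with its own thread pool, the behavior described above can be sketched with a semaphore: the worker only calls `accept()` while it has a free thread, so once every thread is busy, new connections stay in the kernel backlog where another worker (or this one, later) can pick them up. This is a hypothetical Python illustration of the idea, not Puma's code; `run_gated_worker`, its parameters, and the 0.2 s polling interval are made up for the example.

```python
import socket
import threading
import time


def run_gated_worker(listener, max_threads, handle, stop):
    """Hypothetical threaded worker that only accepts when a thread is free.

    While all `max_threads` slots are busy it stops calling accept(), so new
    connections stay in the kernel backlog for this or another worker.
    """
    slots = threading.BoundedSemaphore(max_threads)
    listener.settimeout(0.2)  # wake up periodically to check `stop`

    def run(conn):
        try:
            with conn:
                handle(conn)
        finally:
            slots.release()  # a thread is free again, so accepting resumes

    while not stop.is_set():
        if not slots.acquire(timeout=0.2):
            continue  # every thread is busy: leave the socket alone
        try:
            conn, _ = listener.accept()
        except socket.timeout:
            slots.release()
            continue
        except OSError:
            slots.release()
            break  # listener was closed
        threading.Thread(target=run, args=(conn,), daemon=True).start()


if __name__ == "__main__":
    def slow_handler(conn):
        time.sleep(0.5)  # pretend to render a page
        conn.sendall(b"ok")

    listener = socket.create_server(("127.0.0.1", 0))
    stop = threading.Event()
    threading.Thread(target=run_gated_worker,
                     args=(listener, 2, slow_handler, stop), daemon=True).start()
    port = listener.getsockname()[1]
    start = time.monotonic()
    clients = [socket.create_connection(("127.0.0.1", port)) for _ in range(4)]
    print([c.recv(2) for c in clients], f"{time.monotonic() - start:.1f}s")
    stop.set()
```

With 2 threads and four 0.5 s requests, the run should take roughly one second: the third and fourth connections wait in the backlog until a thread frees up.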

Deciphering Queue Time

Let me define queue time first:

Queue time is the time a request spends before it is picked up by the application code. It includes network time between the load balancer, router, and pods, as well as time spent waiting for an available app process within a pod. This metric is a direct reflection of server capacity rather than app performance.
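In practice, queue time is often measured by having the load balancer or router stamp each request with the time it arrived, then subtracting that stamp when the app starts processing the request. The sketch below assumes an `X-Request-Start: t=<timestamp>` header (nginx can set one using `$msec`, which is seconds with millisecond resolution); some proxies send milliseconds or microseconds instead, so the helper guesses the unit from the magnitude. The header name, format, and helper names are assumptions, and the result is only meaningful if the proxy's and the app host's clocks are synchronized.

```python
import time


def parse_request_start(header_value):
    """Parse 't=<timestamp>' into seconds since the epoch.

    Assumed convention: seconds (e.g. 't=1700000000.123'), milliseconds,
    or microseconds, with the unit guessed from the number's magnitude.
    """
    value = header_value.strip()
    if value.startswith("t="):
        value = value[2:]
    ts = float(value)
    if ts > 1e14:   # microseconds since the epoch
        return ts / 1e6
    if ts > 1e11:   # milliseconds since the epoch
        return ts / 1e3
    return ts       # already in seconds


def queue_time_ms(header_value, now=None):
    """Milliseconds between the proxy stamping the request and the app seeing it."""
    now = time.time() if now is None else now
    elapsed = now - parse_request_start(header_value)
    return max(0.0, elapsed * 1000.0)  # clamp negatives caused by clock skew
```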

Now let's take 2 cases to understand this better.

Case 1: There are two systems, A and B. A has 1 worker and B has 4 workers.

Which one will have lower queue time?

The answer, of course, is B. Both systems have a single queue, but B has 4 consumers draining it, so requests wait less.

Case 2: What about this case? Here both systems have the same total number of worker processes, i.e. 4.

|  | A | B |
|---|---|---|
| Pods | 4 | 1 |
| Workers per pod | 1 | 4 |

Here B will have a much lower queue time: under heavy load, roughly a quarter of A's, and proportionally even less at moderate load.

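This is a result of queueing theory. As utilization approaches 100%, it predicts

queue time (1 queue, s servers) ≈ queue time (s queues, 1 server each) / s

where s is the number of servers and both setups carry the same total traffic.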

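This happens because A has 4 queues with 1 server each, while B has 1 queue with 4 servers. With round-robin or random balancing, the load balancer in A doesn't know which worker is busy, so a request can wait behind a busy worker while a worker in another pod sits idle. In B, whichever worker frees up first takes the next request, hence roughly 1/4th.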
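To put numbers on Case 2, the classic M/M/c queueing model (Poisson arrivals, exponentially distributed service times, one FIFO queue served by c workers) gives the mean wait in closed form through the Erlang C formula. The sketch below is a simplification under those assumptions: setup A is modeled as 4 independent single-worker queues that each get a quarter of the traffic (as random balancing would give), setup B as one queue with 4 workers, and the 100 ms mean service time is an arbitrary example.

```python
import math


def erlang_c(servers, offered_load):
    """Probability that an arriving request has to wait (Erlang C formula).

    offered_load = arrival_rate / service_rate; it must be below `servers`.
    """
    a, c = offered_load, servers
    if a >= c:
        raise ValueError("unstable: offered load must be below server count")
    top = a**c / math.factorial(c) * c / (c - a)
    bottom = sum(a**k / math.factorial(k) for k in range(c)) + top
    return top / bottom


def mean_queue_wait(servers, arrival_rate, service_rate):
    """Mean time a request waits before a worker picks it up (M/M/c)."""
    p_wait = erlang_c(servers, arrival_rate / service_rate)
    return p_wait / (servers * service_rate - arrival_rate)


def compare_case2(utilization, service_rate=10.0, workers=4):
    """A: `workers` pods x 1 worker each. B: 1 pod x `workers` workers."""
    total_rate = utilization * workers * service_rate
    wait_a = mean_queue_wait(1, total_rate / workers, service_rate)
    wait_b = mean_queue_wait(workers, total_rate, service_rate)
    return wait_a, wait_b


if __name__ == "__main__":
    for rho in (0.5, 0.8, 0.95):
        a, b = compare_case2(rho)
        print(f"utilization {rho:.0%}: A={a*1000:.1f} ms  B={b*1000:.1f} ms  B/A={b/a:.2f}")
```

Under this model the ratio B/A works out to C/(c·ρ), where C is the Erlang C waiting probability and ρ the per-worker utilization. As utilization nears 100%, C approaches 1, so B's wait approaches a quarter of A's; at moderate load it is smaller still.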

This is also something a lot of people have a misconception about. The general rule of thumb for containers is one process per container, but this doesn't fit pre-forking web servers (like Unicorn or Puma). For them, we can amend the rule to one master process per container.

Ideally, one should run at least 4 workers per pod to minimize the time requests spend queueing for a free Puma worker.
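A small discrete-event simulation is a quick way to sanity-check this advice for a fixed worker budget without relying on closed-form formulas. This is a sketch under simplifying assumptions: Poisson arrivals, exponentially distributed service times, a load balancer that picks a pod uniformly at random, and one FIFO backlog per pod shared by that pod's workers. The function name and all parameter values are illustrative.

```python
import heapq
import random


def simulate(pods, workers_per_pod, arrival_rate, service_rate,
             n_requests=200_000, seed=42):
    """Mean queue wait when requests are spread randomly across pods.

    Each pod is a single FIFO backlog drained by `workers_per_pod` workers.
    """
    rng = random.Random(seed)
    # Per pod: a min-heap of the times at which each worker becomes free.
    free_at = [[0.0] * workers_per_pod for _ in range(pods)]
    t = 0.0
    total_wait = 0.0
    for _ in range(n_requests):
        t += rng.expovariate(arrival_rate)   # next Poisson arrival
        pod = free_at[rng.randrange(pods)]   # LB picks a pod at random
        start = max(t, pod[0])               # wait for the first free worker
        total_wait += start - t
        heapq.heapreplace(pod, start + rng.expovariate(service_rate))
    return total_wait / n_requests


if __name__ == "__main__":
    budget, service_rate, utilization = 8, 10.0, 0.8
    arrival_rate = utilization * budget * service_rate
    for pods in (8, 2, 1):
        per_pod = budget // pods
        wait = simulate(pods, per_pod, arrival_rate, service_rate)
        print(f"{pods} pods x {per_pod} workers: mean queue wait {wait*1000:.1f} ms")
```

Each request starts at the later of its arrival time and the moment its pod's first worker frees up, which is how a FIFO backlog shared by several workers behaves. Replacing `rng.randrange(pods)` with a round-robin counter lets you check whether the conclusion changes under round-robin balancing.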

In summary, understanding the intricacies of request queueing and the role of web servers like Puma can significantly improve the resilience and efficiency of your Rails services.