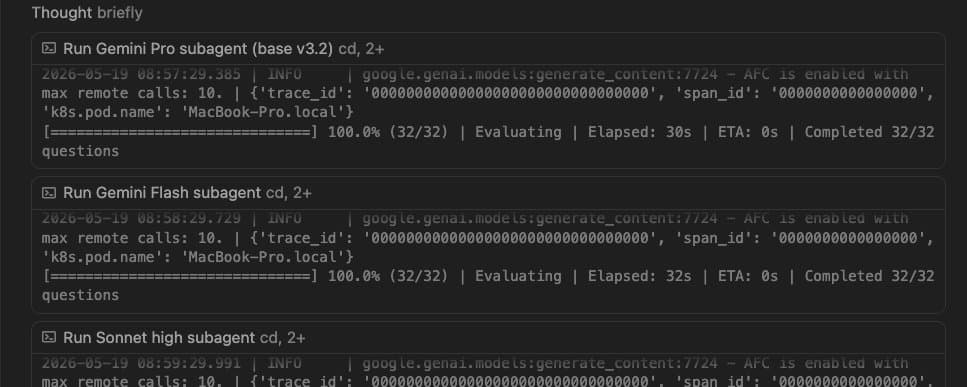

Not All Caches Are Equal: Claude, OpenAI, and Gemini

We focus quite a bit on prompt caching at @LittlebirdAI to keep latency and cost low. But it's very tricky to get right, especially when you deal with multiple providers. There are quite a few really good blogs on how KV caching works and general tips and tricks around it. But it only gets harder from there. I'll try to share more stuff from the wild: what it actually looks like trying to get it "right".

In terms of flexibility (how much you can control) and transparency (how it works in different cases, best practices, etc.):

Claude > OpenAI > Gemini

Claude gives the most flexibility: implicit caching (basically syntactic sugar over explicit) and explicit caching, cache breakpoints, clear rules on what exactly invalidates the cache, etc. Their docs are pretty detailed, cover a lot of different cases, and are the most helpful. That also means it's a bit more complex to understand and implement, but once you get the hang of it, it's worth it.
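To make "cache breakpoints" a bit more concrete, here's a minimal sketch of a Messages API payload with one explicit breakpoint on the system prompt. The `cache_control: {"type": "ephemeral"}` marker is from Anthropic's docs; the helper name `build_claude_request` and the `MODEL_NAME` placeholder are mine, not anything official. It only builds the request body, it doesn't call the API.

```python
import json


def build_claude_request(system_prompt: str, history: list[dict], user_msg: str) -> dict:
    """Build a Messages API payload with one explicit cache breakpoint.

    Hypothetical helper for illustration. The breakpoint sits on the system
    block, so everything up to and including it is eligible for caching.
    """
    return {
        "model": "MODEL_NAME",  # placeholder, swap in a real model id
        "max_tokens": 1024,
        "system": [
            {
                "type": "text",
                "text": system_prompt,
                # Explicit breakpoint: cache the prefix ending at this block.
                "cache_control": {"type": "ephemeral"},
            }
        ],
        # The changing part of the conversation goes after the breakpoint.
        "messages": history + [{"role": "user", "content": user_msg}],
    }


payload = build_claude_request("You are a helpful assistant.", [], "Hello!")
print(json.dumps(payload, indent=2))
```

The idea is to keep the stable stuff (system prompt, tools) before the breakpoint and the per-request stuff after it, so a changing user message doesn't touch the cached prefix.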

Second is OpenAI. Decent docs and info on how it works. It gives some control, but not fine-grained control like breakpoints. It's also not very clear which parameter changes break the cache. A good middle ground IMO.
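For comparison, here's a rough sketch of what "some control" looks like on the OpenAI side, as far as I understand it: caching is automatic and matches on the prompt prefix, so the main lever is putting stable content first, plus the optional `prompt_cache_key` request parameter. The helper name `build_openai_request` and the `MODEL_NAME` placeholder are hypothetical, and this only builds the request kwargs without calling the API.

```python
def build_openai_request(static_instructions: str, user_context: str,
                         question: str, cache_key: str) -> dict:
    """Build Chat Completions kwargs with the stable prefix first.

    Hypothetical helper for illustration. There are no breakpoints here:
    the static instructions go first so identical prefixes can be reused,
    and per-request content goes last.
    """
    return {
        "model": "MODEL_NAME",  # placeholder, swap in a real model id
        "messages": [
            # Stable across requests: keep byte-for-byte identical.
            {"role": "system", "content": static_instructions},
            # Changes per request: keep it at the end of the prompt.
            {"role": "user", "content": f"{user_context}\n\n{question}"},
        ],
        # Optional hint for grouping requests that share a prefix.
        "prompt_cache_key": cache_key,
    }


req_a = build_openai_request("You are a helpful assistant.", "ctx A", "q1", "assistant-v1")
req_b = build_openai_request("You are a helpful assistant.", "ctx B", "q2", "assistant-v1")
# Both requests start with the same system message, so they share a prefix.
print(req_a["messages"][0] == req_b["messages"][0])
```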

Third is Gemini. Very little documentation, and it's hard to understand how to use it properly. The cache TTL is also not fixed. They have both explicit and implicit caching, but the developer experience of explicit is so bad that it's almost unusable. Even gemini-cli doesn't use its explicit caching.

That being said, neither OpenAI nor Gemini charges "separately" for caching. Claude does. IMO you can do much better-controlled caching with Claude and maybe make up for the extra cost you pay for it. But it's tricky nonetheless.
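Here's a back-of-the-envelope sketch of when that extra cost pays off. The multipliers are assumptions (a cache write at 1.25x the base input price and a cache read at 0.1x, which is what I understand Claude's default short-TTL pricing to be, so check the current pricing page), the $3 per million tokens base price is just an example number, and it assumes every follow-up request lands before the cache expires.

```python
def uncached_cost(prefix_tokens: int, requests: int, price_per_mtok: float) -> float:
    """Cost of resending the same prefix on every request with no caching."""
    return prefix_tokens * (price_per_mtok / 1_000_000) * requests


def cached_cost(prefix_tokens: int, requests: int, price_per_mtok: float,
                write_mult: float = 1.25, read_mult: float = 0.10) -> float:
    """Cost of the prefix with caching: one cache write, then cache reads.

    write_mult and read_mult are assumed pricing multipliers, not constants
    from any SDK. Assumes all later requests hit the cache.
    """
    per_token = price_per_mtok / 1_000_000
    write = prefix_tokens * per_token * write_mult
    reads = prefix_tokens * per_token * read_mult * (requests - 1)
    return write + reads


# Example: a 10k-token prefix at a hypothetical $3 per million input tokens.
for n in (1, 2, 10):
    print(n, uncached_cost(10_000, n, 3.0), cached_cost(10_000, n, 3.0))
```

Under these assumptions a single request costs more with caching (you pay the write premium and never read), but it's already cheaper by the second request. The tricky part in practice is making sure those cache hits actually happen.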