Concurrency 101: Getting It Right Using Amdahl's Law

Some things are always inherently vague, like figuring out the exact amount of salt to put in a dish without a recipe. Setting the concurrency of your app might feel the same way!

Without a clear understanding of how concurrency impacts your application, there's a risk of over-engineering sections that might not necessarily require such complexity.

Just as every dish has its unique taste preference and every cook has their unique touch, each application has its unique workload and environment. Finding the right level of concurrency is like seasoning to taste – you might start conservatively and adjust based on the results, just as you might taste a dish and adjust the seasoning.

Why Does This Concurrency Stuff Matter Anyway?

Getting concurrency wrong in your app can lead to some serious hiccups. Overloading with too many concurrent threads can come with its own set of challenges:

- Resource Overhead: Each thread consumes memory. Over-allocating threads can lead to excessive memory usage,

- Contention: If multiple threads are competing for a shared resource for eg: GVL in Ruby (CRuby).

- Decreased Throughput: The overheads from excessive context switching and contention can actually decrease the throughput of the system.

Set the thread count too low and you suffer from:

- Underutilization of Resources

- Increased Latency: With fewer threads, tasks might have to wait in a queue before they can be processed.

- Reduced Throughput

It's like adding too much salt to a dish. Even though salt enhances flavor, using an excessive amount can overpower and ruin the meal. Similarly, too few grains of salt might render the dish tasteless. Finding the right balance in seasoning (or thread allocation) ensures the best taste (or performance) without overwhelming or underwhelming the palate (or system).

Amdahl's Law Explained: The Limits of Speeding Up Your Code

Amdahl's Law is a fundamental principle in parallel computing and is named after computer architect Gene Amdahl. It provides a theoretical framework to understand the potential speedup of a task when only a portion of it can be parallelized.

The formula for Amdahl's Law is:

- S (speedup) due to parallelization.

- P is the proportion of the program that can be parallelized (a value between 0 and 1). If 60% of a task can be parallelized, P would be 0.6.

- N is the number of processors (or threads, in our context).

Now before we jump into how we can use this to figure out the right configuration for our app, let's understand how we arrive at it.

Imagine you have a task that takes 1 unit of time to complete on a single processor. Now, if only a portion P of this task can be perfectly parallelized (i.e., split across multiple processors), and the remaining portion 1−P is strictly serial and cannot be parallelized at all, then:

- The serial portion will always take (1−P) units of time, regardless of how many processors you have.

- The parallelizable portion will take P/N units of time when split across N processors.

Hence, the total time taken is (1-P) + (P/N) for N processes.

The speedup due to parallelization is defined as the ratio of the execution time on a single processor to the execution time on N processors.

Hence,

S = 1/((1-P)+P/N)

The beauty of Amdahl's Law lies in its simplicity and the insights it provides:

The Non-Parallelizable Portion Limits Speedup: As N (number of threads) goes to infinity, the maximum speedup that can be achieved is 1/(1-P). This means even if you have unlimited threads, the non-parallelizable portion of your task will limit the maximum speedup you can achieve.

Diminishing Returns: As you add more threads, the incremental speedup you get from each additional thread diminishes. This is because the non-parallelizable portion of the task remains constant and unaffected by the increase in threads.

Enough of the groundwork; let's dive into its real-world application!

From Classroom Chalk to Production Talk

Now I will talk in the context of Ruby(CRuby/MRI), but you can apply a similar methodology to any system and fine-tune it.

In Ruby, threads can't run in parallel(due to how GVL works) but as per Ahmdal's law, we gain speedup only from additional parallelism. But Ruby threads work in parallel during IO operations(like those database calls or pinging external APIs).

To figure out what P (in this case % of time spent during IO) is in your application. We can use Pareto's principle (80% of work is done by 20% of the APIs) to quickly figure out an approximate value.

- Identify Your Main Ingredients: Just as in cooking, start with your primary components. Use any APM, I'll use New Relic. Order your transactions by the most time-consuming, taking the top 20% (akin to picking the freshest ingredients for our dish).

For this recipe, we're selecting the top 6.

- Time for Some Taste Testing: For every transaction, determine the time spent in IO. Think of it as tasting while cooking, making sure the flavors are balanced.

Our example, the api/v0/leads#fetch_all_leads transaction, shows around 24% time spent in the controller. The rest is a delicious mix of database interactions and external API calls. Repeat for the others.

Mixing the Flavors: With all the time percentages in hand, use weighted averages to get the total time percentage in IO.

In my case, it comes to around 57% IO time

In my case, it comes to around 57% IO timeThe Final Garnish: With the help of ChatGPT, visualize the relationship between Speedup and Number of Threads.

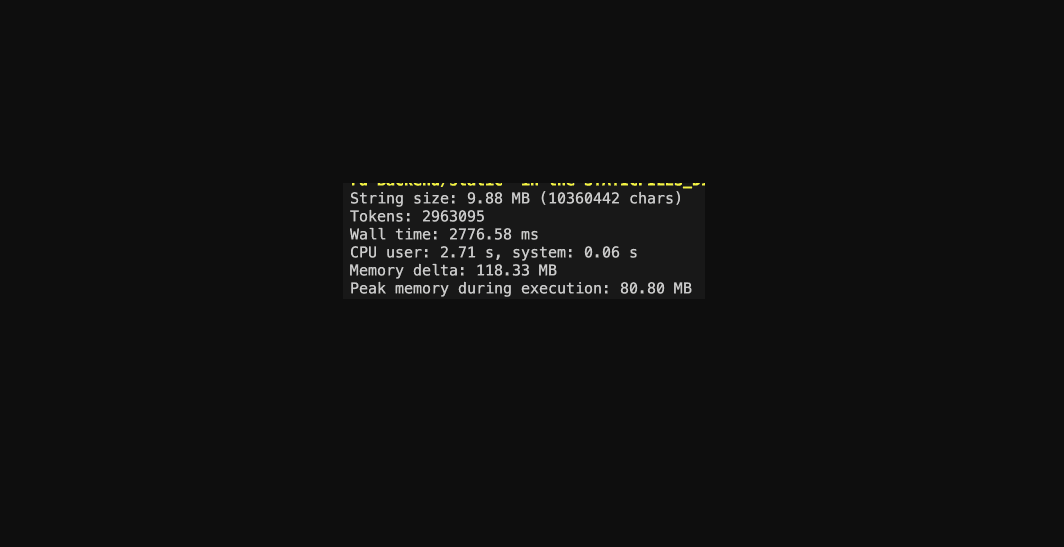

Currently, the application's concurrency is set at 4. If we nudge it up to 5, we can anticipate a theoretical speedup of 5.24%. And if we push it to 6? A notable 9.05% boost compared to our existing setup.

Tuning the DB Pool for Better Concurrency!

When adjusting concurrency settings, it's ESSENTIAL to keep an eye on your Database Pool size. Should your pool size fall short of your process-level concurrency (be it Puma thread count or Sidekiq concurrency), expect an uptick in latency. This happens as threads are left waiting for an available database connection.

A recommended approach for determining your DB_POOL size is to set it to the value of concurrency, plus 5. This is because Rails occasionally initiates its own threads, which in turn necessitate more connections.

A good way to handle this is by employing a single ENV variable for both configurations, as illustrated below:

Conclusion

While we've theoretically pinpointed the optimal thread count, real-world scenarios often throw curveballs. Boosting threads can hike up memory usage, among other potential challenges. The golden strategy? Set a ballpark range, tinker within it, and tailor the perfect concurrency setting for your unique app.